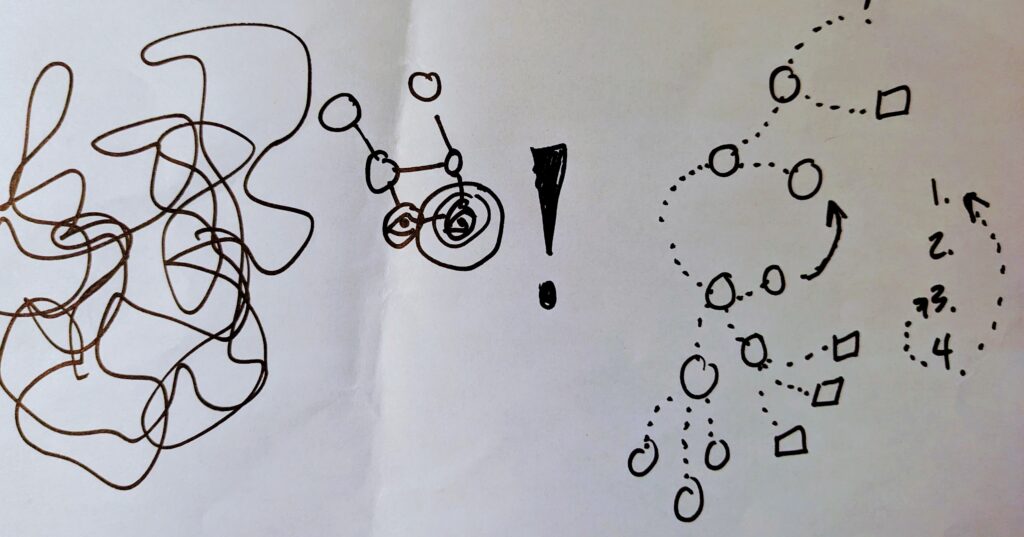

Last summer I was reading multiple books at the same time. (Well, you know, I don’t actually read them simultaneously!) Notably, two were nonfiction, which is not my common summer practice. Two standouts were Braiding Sweetgrass by Robin Wall Kimmerer and Everybody Come Alive by Marcie Alvis Walker. Serendipity in action. The writing of these two amazing women brought, and continues to bring, me a deep, embodied sense of other ways of knowing, ways different from the ones I use in my life, mostly without awareness. These books helped me begin to notice the limitations of my own views and stances, and the importance of querying myself to understand where I’m coming from as a stepping stone to being open to others’.

This week another piece of writing, this time from Bayo Akomolafe, came across my screen, and this sense, this idea of our separateness and togetherness, of what we do or do not identify with and how we presume power over it or not, jumped out again. He was asked to provide an alternative to the Oxford English Dictionary’s definition of nature, and he wrote:

> So, I offered this:
>
> ‘A theoretical, economic, political, and theological designation from the Enlightenment era that attempts to name the material world of trees, ecologies, animals, and general features and products of earth as separate from humans and human society, largely in a bid to position humans as masters over material forces, independent and capable of transforming the world for their exclusive ends.’
>
> It’s as far as I could go without waxing poetic about nature as a colonial trope for biopolitical interventions. What felt important to say was that ‘nature’ is a performative, speculative gesture, a ritual of relations that rehearses a dissociation from the world. A subjectivizing force. A lounge in the terminal of the radioactive Human.

Now to notice how I am “performing…”